cgupta.tech

Is this tweet real, or generated by a neural network based on random noise?

Donald J. Trump

@realDonaldTrump

I strongly pressed President Putin twice about Russian meddling in our election. He vehemently denied it. I've already given my opinion.....

- An implementation of a recurrent neural network (RNN) with LSTM units for text generation. It is trained on a dataset of Donald Trump tweets using contextual labels, and it can generate realistic tweets from random noise.

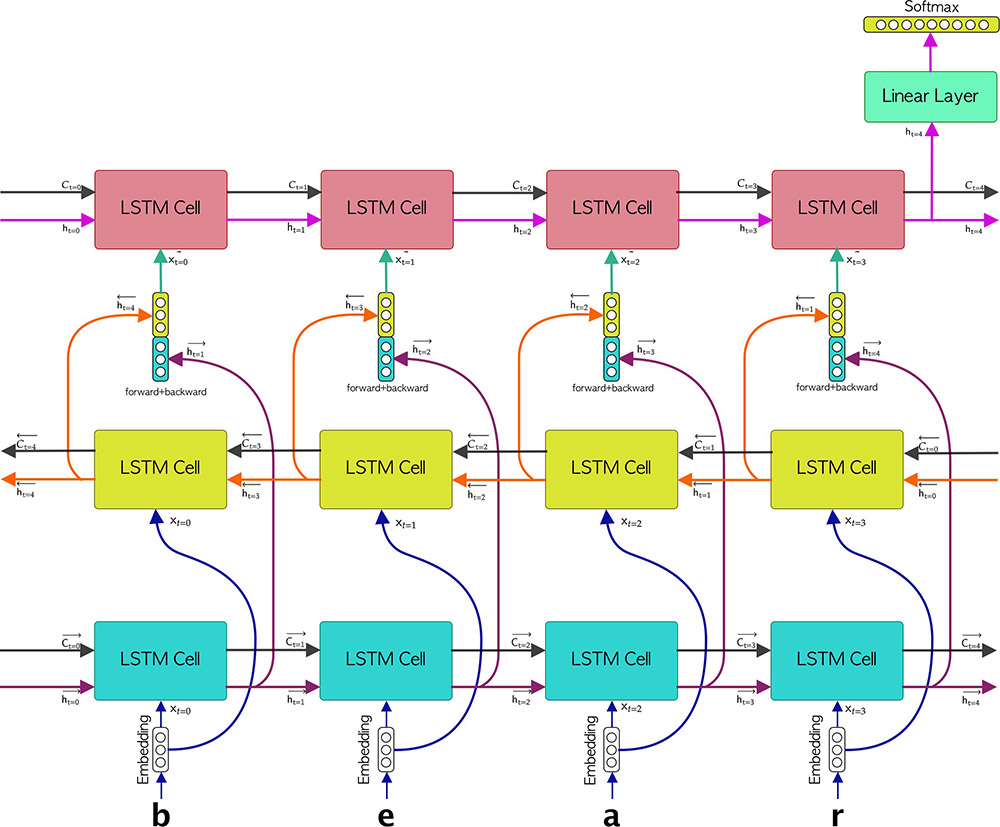

- Support for bidirectional RNNs and for techniques such as attention weighting and skip embedding.

- cuDNN implementation for training the RNNs on an NVIDIA GPU.

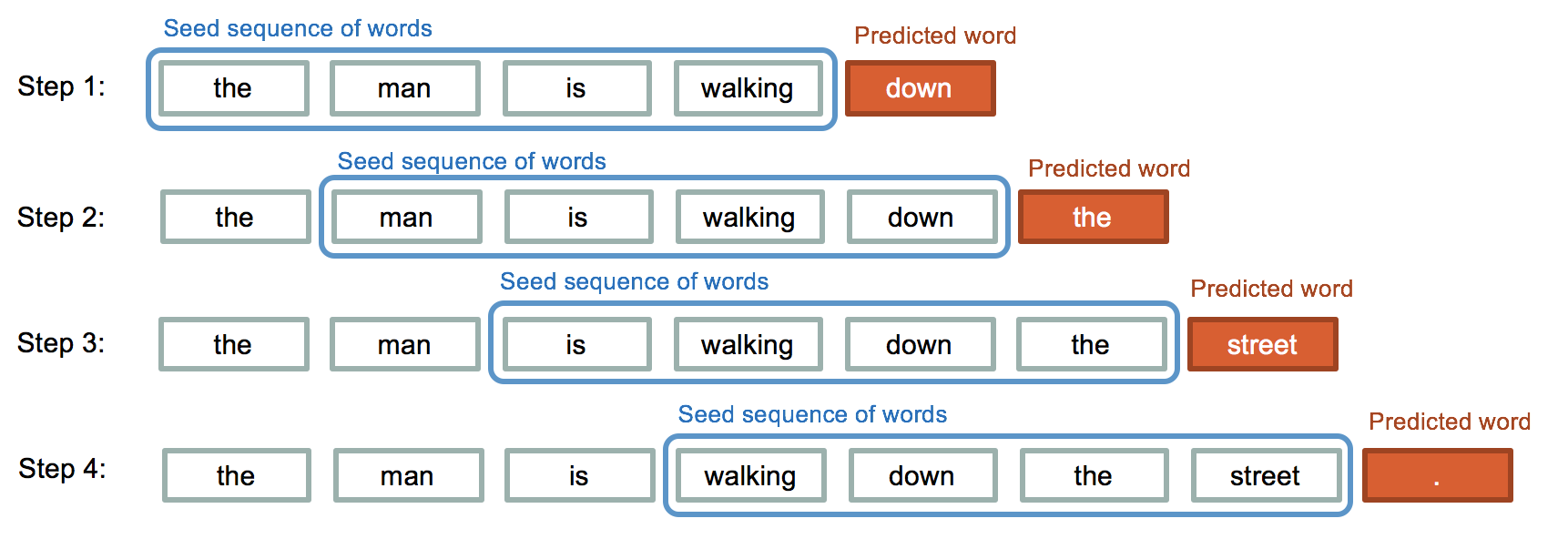

The model also learns how similar words or characters are to one another and calculates a probability for each.

Using those probabilities, it predicts or generates the next word or character in the sequence.
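
The last step, turning per-character scores into a choice of next character, can be illustrated with a minimal sketch. This is not the project's actual code: the `softmax` and `sample_next` helpers, the `temperature` parameter, and the example vocabulary and scores are all assumptions made for illustration, and a real model would produce the scores from its LSTM layers.

```python
import math
import random


def softmax(scores, temperature=1.0):
    """Turn raw scores into probabilities.

    A lower temperature sharpens the distribution (assumed parameter,
    not taken from the original project).
    """
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next(vocab, scores, temperature=1.0, rng=random):
    """Pick the next token at random, weighted by its probability."""
    probs = softmax(scores, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]


# Hypothetical scores a trained model might assign after seeing "grea".
vocab = ["t", "s", "d", "!"]
scores = [4.0, 1.0, 0.5, 0.1]

probs = softmax(scores)
next_char = sample_next(vocab, scores, temperature=0.5, rng=random.Random(0))
```

Sampling from the distribution, rather than always taking the most likely character, is one common way to keep generated text from repeating itself. Generating a full tweet would repeat this step, appending each sampled character to the input before scoring again.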